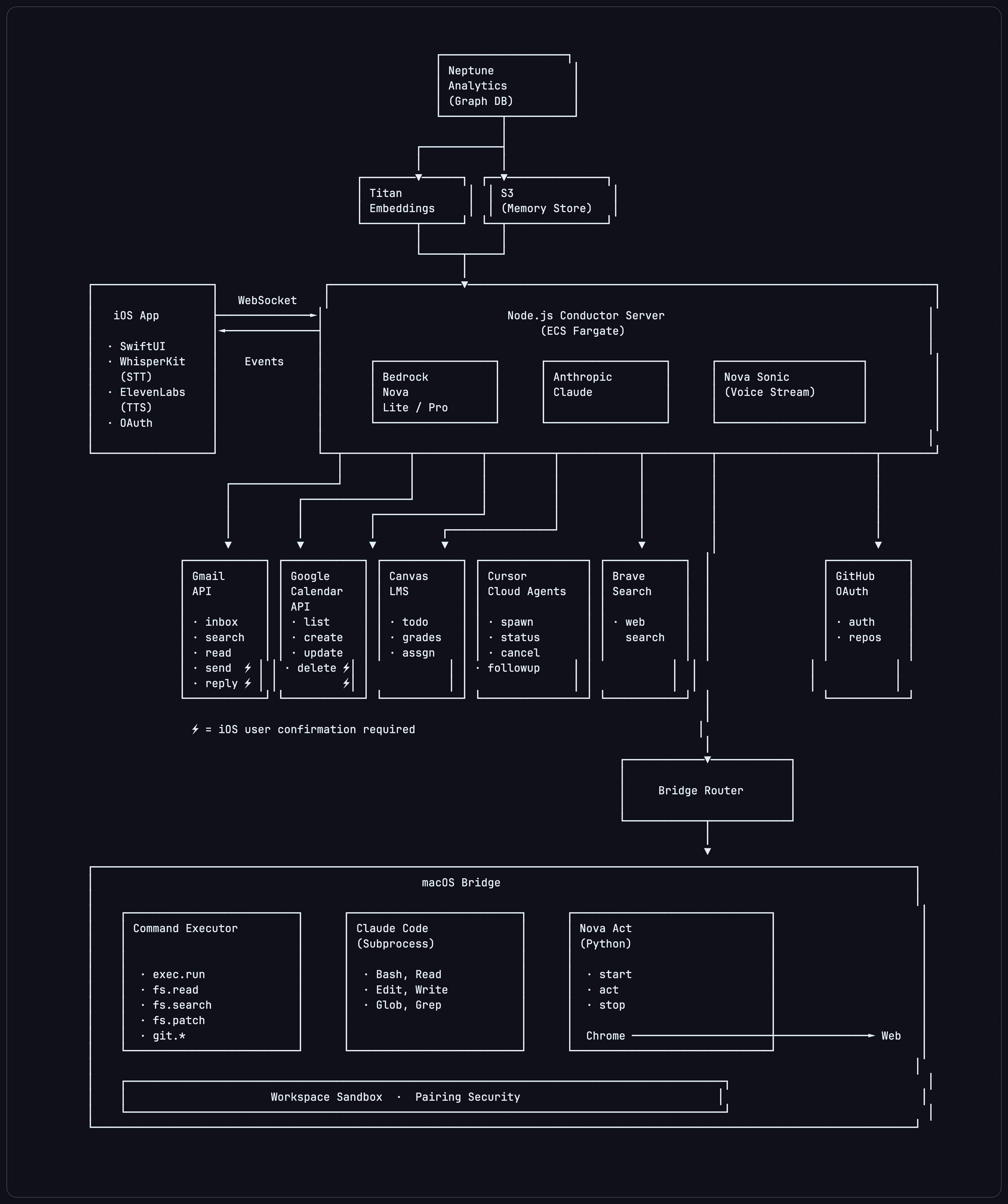

System architecture

The product is split across an iPhone-native client, a TypeScript conductor, and a paired macOS bridge so voice stays natural while privileged local execution stays gated.

Voice-First AI Assistant

The assistant that lives on your iPhone and reaches your Mac when the work gets real.

Why It Stands Out

Abyss is designed to feel lightweight on the phone while still being able to escalate into real work across coding, productivity, and secure local execution.

Flagship Workflow

Abyss starts on the iPhone, then escalates into real development work through Cursor Cloud Agents, terminal execution, file operations, git workflows, and browser automation on a paired Mac.

Personal Assistant

The same assistant that can kick off coding work can also triage Gmail, manage Google Calendar, check Canvas, search the web, and keep the conversation readable with inline result cards.

Security

Abyss does not pretend trust is free. Risky local actions live behind a separately paired macOS bridge with workspace boundaries, capability toggles, and explicit confirmation patterns for sensitive mutations.
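To make the three gates concrete, here is a minimal sketch of how a bridge might check a request against capability toggles, a workspace boundary, and a confirmation flag. Every name in it (`BridgePolicy`, `ActionRequest`, `authorize`, the capability list) is a hypothetical illustration, not the actual Abyss bridge API, and the path check is purely lexical, so it assumes absolute POSIX-style paths and does not resolve symlinks.

```typescript
// Hypothetical sketch: these types and names are assumptions, not Abyss code.
type Capability = "terminal" | "files" | "git" | "browser";

interface BridgePolicy {
  workspaceRoot: string;                 // absolute path the bridge may touch
  enabled: Record<Capability, boolean>;  // per-capability toggles
}

interface ActionRequest {
  capability: Capability;
  targetPath?: string;  // absolute path the action touches, if any
  mutating: boolean;    // true for writes, deletes, pushes, and similar
  confirmed: boolean;   // true once the user has explicitly approved
}

type DenyReason = "capability_disabled" | "outside_workspace" | "needs_confirmation";
type Decision = { allow: true } | { allow: false; reason: DenyReason };

// Split a path into segments, resolving "." and ".." lexically.
function normalize(path: string): string[] {
  const out: string[] = [];
  for (const seg of path.split("/")) {
    if (seg === "" || seg === ".") continue;
    if (seg === "..") out.pop();
    else out.push(seg);
  }
  return out;
}

// True when target is the workspace root or lies beneath it.
function isInsideWorkspace(root: string, target: string): boolean {
  const r = normalize(root);
  const t = normalize(target);
  return r.length <= t.length && r.every((seg, i) => seg === t[i]);
}

// Apply the gates in order: toggle, boundary, confirmation.
function authorize(policy: BridgePolicy, req: ActionRequest): Decision {
  if (!policy.enabled[req.capability]) {
    return { allow: false, reason: "capability_disabled" };
  }
  if (req.targetPath !== undefined && !isInsideWorkspace(policy.workspaceRoot, req.targetPath)) {
    return { allow: false, reason: "outside_workspace" };
  }
  if (req.mutating && !req.confirmed) {
    return { allow: false, reason: "needs_confirmation" };
  }
  return { allow: true };
}
```

In this sketch a denied mutation returns `needs_confirmation` rather than failing outright, so a client could prompt the user and resend the request with `confirmed: true`.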

Continuity

Abyss supports multi-chat sessions, inline transcript cards, conversation summaries, user preferences, and optional long-term memory infrastructure so the assistant can pick up real threads over time.

Judge Demo Story

The strongest Abyss demo path is simple: speak to the iPhone, trigger a useful assistant task, then step up into a permissioned coding workflow on the paired Mac. The product story is not just “voice chat”; it is voice as the front door to secure execution.

Architecture

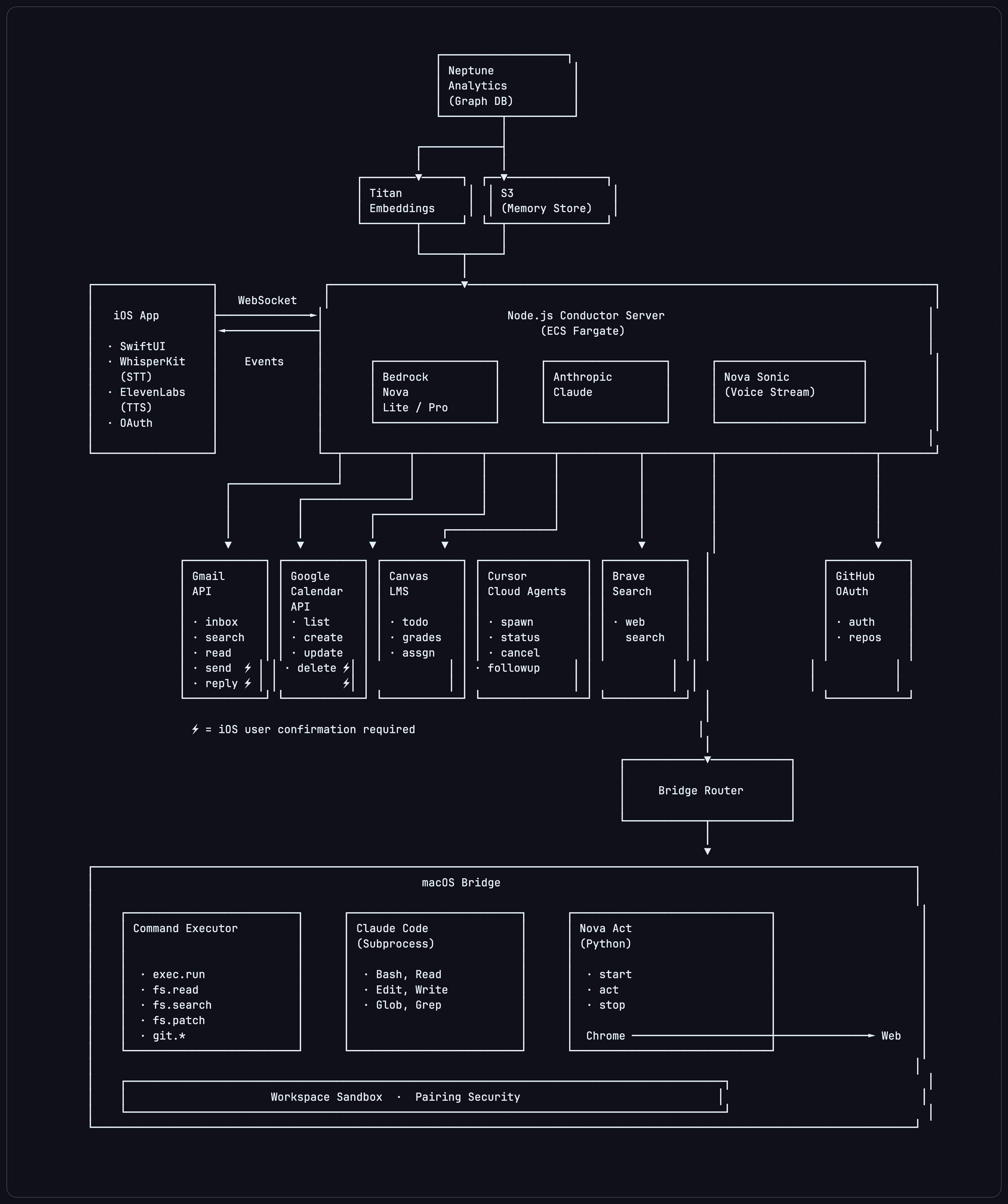

The architecture matters because the product promise depends on it: natural voice on the phone, strong tool orchestration on the server, and privileged local actions behind an explicit bridge.

Speech or text becomes structured events over WebSocket, tools run in the right place, and every result flows back into the transcript as readable inline cards and assistant messages.
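The flow described here can be sketched as three small steps: parse an incoming WebSocket message into a typed event, decide where a tool runs, and shape the result into a transcript card. The event shapes, tool names, and helper functions below are assumptions made for illustration; the source does not show the real Abyss protocol, and the WebSocket transport itself is left out so the sketch stays self-contained.

```typescript
// Hypothetical event and card shapes; the real Abyss protocol is not shown in the source.
type ClientEvent =
  | { type: "user.text"; sessionId: string; text: string }
  | { type: "user.audio.transcript"; sessionId: string; text: string };

type ToolTarget = "server" | "bridge";

interface TranscriptCard {
  kind: "card";
  title: string;
  body: string;
}

// Assumed mapping from tool name to where it executes.
const TOOL_TARGETS = new Map<string, ToolTarget>([
  ["gmail.triage", "server"],
  ["calendar.list", "server"],
  ["terminal.exec", "bridge"],
  ["git.status", "bridge"],
]);

// Parse one raw WebSocket text frame; returns null for anything malformed.
function parseEvent(raw: string): ClientEvent | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) return null;
  const { type, sessionId, text } = data as Record<string, unknown>;
  if (typeof sessionId !== "string" || typeof text !== "string") return null;
  if (type === "user.text") return { type, sessionId, text };
  if (type === "user.audio.transcript") return { type, sessionId, text };
  return null;
}

// Decide where a tool runs; unknown tools are rejected rather than guessed.
function routeTool(tool: string): ToolTarget | null {
  return TOOL_TARGETS.get(tool) ?? null;
}

// Turn a tool result into a card that can be appended to the transcript.
function toCard(tool: string, output: string): TranscriptCard {
  return { kind: "card", title: tool, body: output.trim() };
}
```

Returning `null` for unknown tools keeps the default closed: in this sketch, nothing reaches the bridge unless it is explicitly mapped there.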

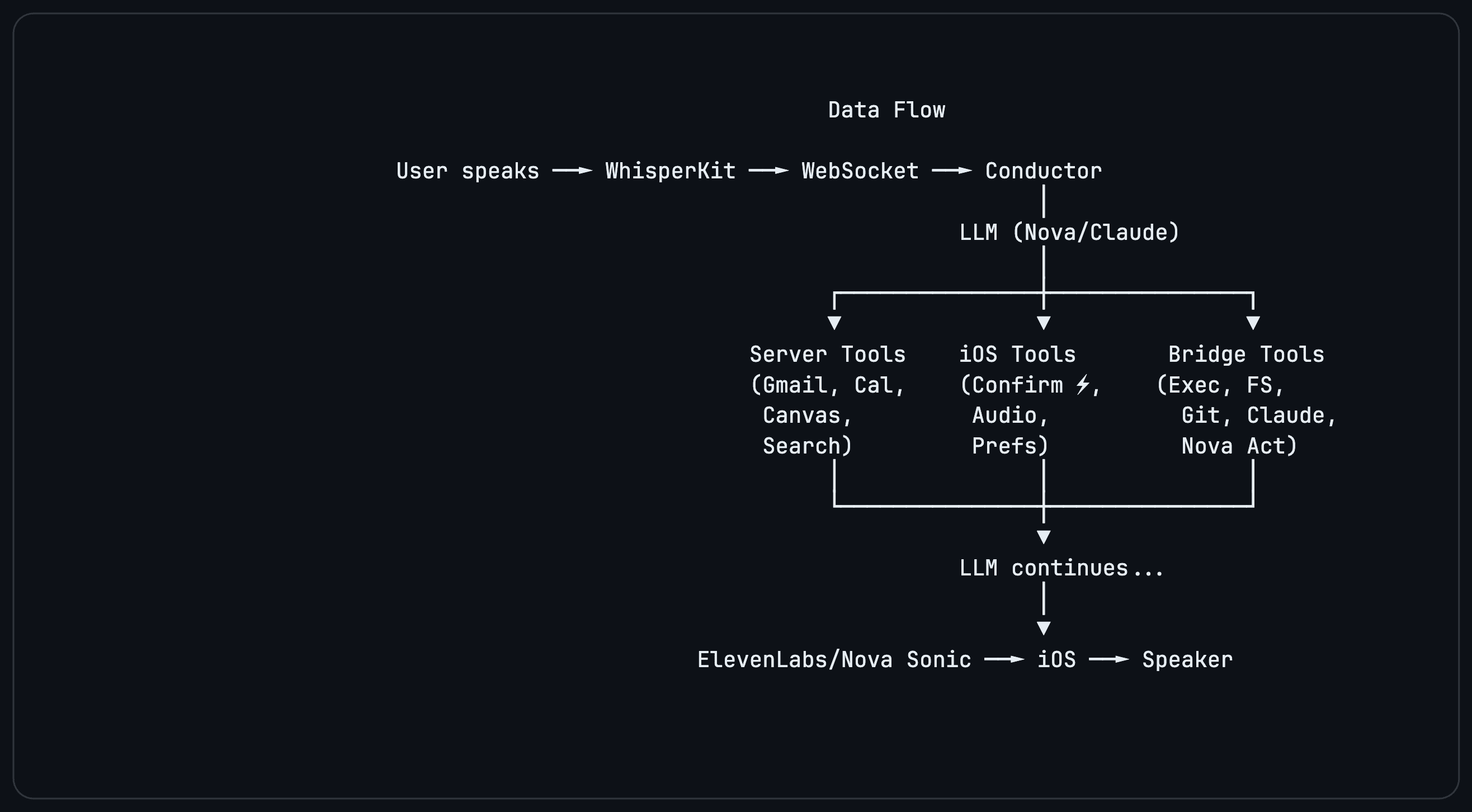

The stack is production-minded end to end: Amazon Bedrock for models, ECS Fargate for the server, optional memory infrastructure, and Apple-native clients on the front line.

Tech and Connections

Abyss combines Apple-native UX, a TypeScript orchestration layer, Amazon Bedrock model routing, a permissioned Mac bridge, and a growing set of integrations that make the assistant feel useful across both development and day-to-day work.

The Pitch

“Abyss makes the phone the primary interface, keeps local execution permissioned, and turns voice into a serious surface for coding and everyday work.”

This is the north star: the most capable, trusted, and personally useful assistant in your pocket, with the architecture to back up the claim.